Build Systems That Improve Themselves: The Flywheel Effect for AI Workflows

Most AI workflows are linear. You build a prompt, run it, get output, done. The next time you need the same thing, you start from scratch. Maybe you save the prompt somewhere. Maybe you don’t.

A flywheel is different. Every cycle produces output AND improves the system that produced it. The more you run it, the better it gets. After enough cycles, the system generates more value than you put in.

Here’s how to build one.

What Makes a Flywheel

A flywheel has three properties that separate it from a regular workflow:

Feedback loops. Output from one step feeds back into an earlier step. A content pipeline where editors score articles and those scores update the writing instructions is a feedback loop. A deployment script that logs failures and adds the failure patterns to its validation checks is a feedback loop.

Compounding returns. Each cycle makes the next cycle easier, faster, or higher quality, and the gains accumulate rather than reset. The tenth cycle doesn’t start from zero; it starts from the improvements made in all nine previous cycles. In our content pipeline, the first 10 articles took about 45 minutes each to edit. After 40+ articles refined the writer instructions, editing time dropped to under 20 minutes with fewer issues to fix.

Self-generated fuel. The system produces its own inputs. A content pipeline that spawns new article ideas while writing current articles never runs out of material. An agent system where each completed task generates data for improving future task handling never runs out of training signal.

Anatomy of a Real Flywheel

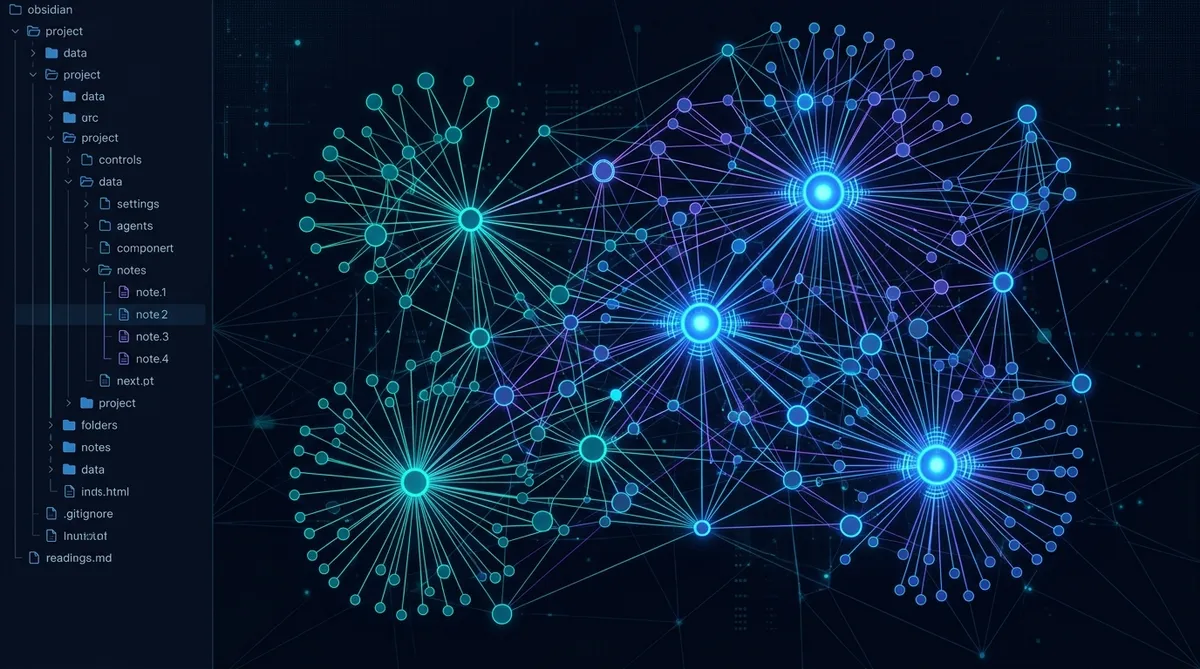

We run a content pipeline that publishes articles across multiple sites. Here’s how the flywheel works in practice:

The cycle:

- Curator selects raw content ideas and shapes them into briefs

- Writer produces a first draft following role instructions and a brand voice profile

- Writer self-scores the draft against a rubric (six dimensions, brand-specific weights)

- Editor runs four editing passes, then scores the edited version against the same rubric

- The score delta (before vs. after editing) reveals where the writer’s instructions are weak

- Instructions get updated to address the weak spots

- Spawned ideas from writing and editing feed new briefs back to the curator

- Next cycle starts with better instructions and a fuller idea queue
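The score-delta step in the cycle above can be sketched in a few lines. This is a minimal illustration, not our production code: the dimension names (`accuracy`, `voice`, `structure`) and the scores are hypothetical, since the actual rubric dimensions and weights are not listed here.

```python
def score_deltas(writer_scores: dict[str, float],
                 editor_scores: dict[str, float]) -> dict[str, float]:
    """Editor score minus writer self-score, per rubric dimension."""
    return {
        dim: editor_scores[dim] - writer_scores[dim]
        for dim in writer_scores
        if dim in editor_scores
    }


def weakest_dimensions(deltas: dict[str, float], top_n: int = 2) -> list[str]:
    """Dimensions that editing improved the most, largest delta first."""
    return sorted(deltas, key=lambda dim: deltas[dim], reverse=True)[:top_n]


# Hypothetical scores for one article (not real pipeline data).
writer = {"accuracy": 7, "voice": 6, "structure": 8}
editor = {"accuracy": 9, "voice": 9, "structure": 8}

deltas = score_deltas(writer, editor)
print(weakest_dimensions(deltas))  # ['voice', 'accuracy']
```

A large positive delta means the editor had to do a lot of work on that dimension, so that is where the writer instructions most likely need tightening.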

Why Scoring Is the Engine

Every article we publish improves the system that wrote it. After 40+ articles through this pipeline, the writer instructions have been refined a dozen times. Common failure patterns (supply list mismatches, padding sections, repeated content elements) became checklist items that prevent them from recurring. That’s compounding.

The Five Feedback Loops

Any system can become a flywheel by adding the right feedback loops. Here are the five patterns that show up most often in AI workflows:

1. Output-to-Instruction Loop

What the AI produces feeds back into how you instruct it. If your coding agent keeps making the same type of error, add that error pattern to its instructions. If your writing agent drifts from the desired voice, tighten the voice profile with specific examples of the drift. Before we added this loop, our writer produced articles that needed 8-12 edits per piece. After six rounds of instruction updates targeting the recurring patterns, that dropped to 2-4 edits.

Instruction Improvement Prompt

Here's the output you just produced: [paste output]

Here are the issues I found: [list issues]

Update the instruction set to prevent these specific issues in future outputs. Add concrete rules, not vague guidelines. Each rule should be testable: someone reading the output could verify whether the rule was followed.
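If you run this loop often, it may help to fill the prompt from code rather than by hand. The helper below is a hypothetical sketch: the name `build_instruction_prompt` and the bullet formatting of the issues are assumptions for illustration, and the template text is the prompt above.

```python
PROMPT_TEMPLATE = """Here's the output you just produced:
{output}

Here are the issues I found:
{issues}

Update the instruction set to prevent these specific issues in future outputs. \
Add concrete rules, not vague guidelines. Each rule should be testable: someone \
reading the output could verify whether the rule was followed."""


def build_instruction_prompt(output: str, issues: list[str]) -> str:
    """Fill the instruction-improvement prompt with one output and its issues."""
    if not issues:
        # Without concrete issues there is nothing for the loop to learn from.
        raise ValueError("list at least one issue")
    issue_lines = "\n".join(f"- {issue}" for issue in issues)
    return PROMPT_TEMPLATE.format(output=output.strip(), issues=issue_lines)


prompt = build_instruction_prompt(
    "Draft text goes here.",
    ["Supply list does not match the examples", "Section 3 repeats section 1"],
)
print(prompt)
```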

2. Error-to-Validation Loop

Failures become guardrails. Every time something breaks, the fix isn’t just patching the current problem; it’s adding a check that catches that class of problem in all future runs.

This is how deployment pipelines mature. First deploy: manual. After the first failure: add a build check. After the second failure: add a preview step. After the third: add rollback automation. Each failure makes the system more robust.
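One way to picture this loop is a validation suite that only ever grows. The sketch below is an assumption-laden illustration: `ValidationSuite`, the check names, and the `build_ok` and `preview_url` fields are hypothetical and not taken from any real deployment tool.

```python
from typing import Callable

Check = Callable[[dict], bool]  # returns True when the candidate passes


class ValidationSuite:
    """A registry of named checks; each past failure adds one more."""

    def __init__(self) -> None:
        self._checks: dict[str, Check] = {}

    def add_check(self, name: str, check: Check) -> None:
        self._checks[name] = check

    def failures(self, candidate: dict) -> list[str]:
        """Names of every check the candidate does not pass."""
        return [name for name, check in self._checks.items()
                if not check(candidate)]


suite = ValidationSuite()
# After the first failure: require a successful build.
suite.add_check("build_succeeded", lambda c: c.get("build_ok", False))
# After the second failure: require a preview before going live.
suite.add_check("preview_available", lambda c: bool(c.get("preview_url")))

print(suite.failures({"build_ok": True}))  # ['preview_available']
```

The point of the design is that a fix is not finished until it has left a check behind in the suite.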

3. Spawn Loop

Work generates more work. Not busywork, but genuine new inputs. While writing an article about AI benchmarks, you realize there’s a separate article about pricing models. While building an agent workflow, you notice a reusable component that could be its own tool. Capture these systematically. Our last batch of 4 articles spawned 12 new content ideas across three brands. That’s a 3:1 spawn rate: as long as it stays above 1:1, the idea queue fills faster than we drain it.

- Every completed task should ask: “What new tasks did this reveal?”

- Spawned items go into a central intake queue, not scattered notes

- Review spawned items during the next planning cycle

- Track spawn rate as a health metric (zero spawns = stale system)
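The checklist above amounts to a central queue plus one counter. Here is a minimal sketch, assuming a hypothetical `IntakeQueue` class and placeholder idea names; the 4-article, 12-idea figures mirror the batch described above.

```python
from collections import deque
from dataclasses import dataclass, field


@dataclass
class IntakeQueue:
    """Central queue for spawned ideas, with spawn rate as a health metric."""
    items: deque = field(default_factory=deque)
    completed_tasks: int = 0
    spawned_total: int = 0

    def record_completion(self, spawned_ideas: list[str]) -> None:
        """Call once per finished task with whatever new tasks it revealed."""
        self.completed_tasks += 1
        self.spawned_total += len(spawned_ideas)
        self.items.extend(spawned_ideas)

    def spawn_rate(self) -> float:
        """Average number of new ideas per completed task."""
        if self.completed_tasks == 0:
            return 0.0
        return self.spawned_total / self.completed_tasks


queue = IntakeQueue()
for article in range(4):
    # Placeholder ideas: three per article, as in the batch described above.
    queue.record_completion([f"idea-{article}-{n}" for n in range(3)])

print(queue.spawn_rate())  # 3.0
```

A spawn rate that drifts toward zero is the early warning that the system is going stale.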

4. Usage-to-Priority Loop

What gets used most should get optimized first. If your agent team runs a code review workflow 50 times a week and a documentation workflow twice a month, invest your improvement cycles in code review. Usage data drives prioritization.
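To compare workflows that run on different schedules, normalize them to the same period before ranking. This is a rough sketch using the two workflows from the example above; the helper name `runs_per_week` and the 52/12 weeks-per-month conversion are assumptions.

```python
WEEKS_PER_MONTH = 52 / 12  # average number of weeks in a month


def runs_per_week(count: float, period: str) -> float:
    """Convert a usage count per 'week' or 'month' into runs per week."""
    if period == "week":
        return float(count)
    if period == "month":
        return count / WEEKS_PER_MONTH
    raise ValueError(f"unsupported period: {period!r}")


usage = {
    "code_review": runs_per_week(50, "week"),
    "documentation": runs_per_week(2, "month"),
}

# Most-used workflow first: that is where improvement cycles should go.
priority = sorted(usage, key=usage.get, reverse=True)
print(priority)  # ['code_review', 'documentation']
```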

5. Cross-System Learning Loop

Lessons from one system transfer to others. A validation pattern that works in your deploy pipeline might apply to your content pipeline. An instruction design pattern from your writing agent might improve your coding agent. We added a “consistency audit” step to our editor pass (checking that supply lists match examples, claimed counts match actual counts) after catching similar mismatch bugs in our deploy validation. Same class of problem, different domain. Build bridges between your systems, not silos.

When Flywheels Stall

Flywheels lose momentum for three common reasons:

Feedback stops flowing. You stop scoring, stop reviewing, stop measuring. The system keeps running but stops improving. This is the most common failure. Fix it by making feedback a required step in the workflow, not an optional one.

The input queue dries up. No new ideas, no new tasks, no fuel. This usually means the spawn loop broke. Check whether completed work is generating new inputs. If not, add an explicit “what did this reveal?” step.

Improvement debt accumulates. You have a backlog of known issues and instruction updates that never get applied. The system is running on stale rules. Fix it by batching improvements: after a fixed number of cycles (5-10 is a reasonable default), stop and apply all pending updates before continuing. We use a smaller batch and do this at the end of every content batch: four articles through the pipeline, then half an hour applying the lessons before the next batch starts.
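Batching can be enforced mechanically so the backlog never grows unbounded. The sketch below is illustrative only: `ImprovementBacklog` is a hypothetical class, "applying" an update is reduced to moving it between lists, and the batch size of four matches the four-article batches mentioned above.

```python
class ImprovementBacklog:
    """Collect pending updates and apply them all every `batch_size` cycles."""

    def __init__(self, batch_size: int) -> None:
        self.batch_size = batch_size
        self.pending: list[str] = []
        self.applied: list[str] = []
        self.cycles_since_apply = 0

    def note(self, update: str) -> None:
        """Record an instruction update that should be applied later."""
        self.pending.append(update)

    def finish_cycle(self) -> bool:
        """Mark one cycle done; return True if the batch was applied."""
        self.cycles_since_apply += 1
        if self.cycles_since_apply < self.batch_size:
            return False
        self.applied.extend(self.pending)
        self.pending.clear()
        self.cycles_since_apply = 0
        return True


backlog = ImprovementBacklog(batch_size=4)
results = []
for cycle in range(4):
    backlog.note(f"lesson from cycle {cycle + 1}")
    results.append(backlog.finish_cycle())

print(results)               # [False, False, False, True]
print(len(backlog.pending))  # 0
```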

Building Your First Flywheel

You don’t need to architect a complex system from scratch. Start with any existing workflow and add one feedback loop.

Flywheel Design Prompt

Here's a workflow I run regularly: [describe your workflow steps]

Help me add a feedback loop that makes this workflow improve over time. For each step, identify:

1. What output could feed back into an earlier step?

2. What measurement would show whether the system is improving?

3. What's the minimum viable feedback mechanism I could add today?

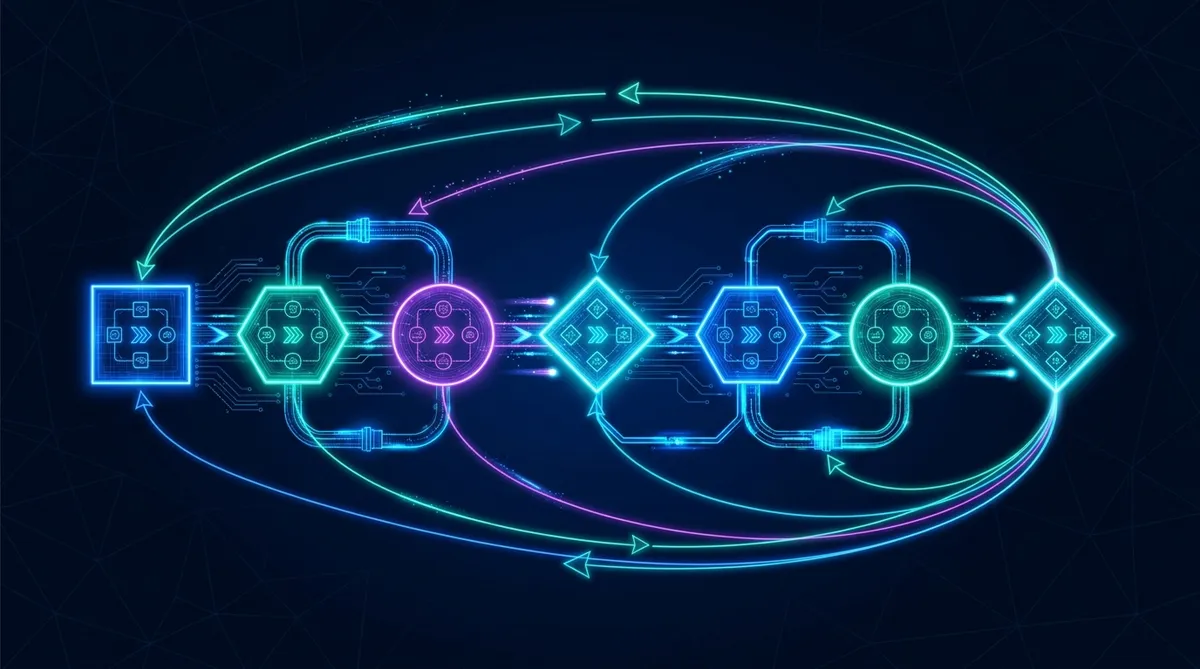

The progression looks like this:

- Start with a working linear workflow

- Add measurement (score, count, rate, or time something)

- Add a feedback path (measurement results update instructions/rules/configs)

- Add a spawn mechanism (each cycle generates inputs for future cycles)

- Run 5-10 cycles and check: is cycle 10 measurably better than cycle 1?
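The final check in the progression above is a simple comparison between the first and latest measurement. Here is a minimal sketch; the function names and the 5% threshold are assumptions, and the per-cycle editing times are made-up illustration values apart from the 45-minute starting point.

```python
def relative_change(first: float, latest: float) -> float:
    """Fractional change from the first measurement to the latest."""
    if first == 0:
        raise ValueError("first measurement must be non-zero")
    return (latest - first) / first


def is_improving(measurements: list[float],
                 lower_is_better: bool = True,
                 min_change: float = 0.05) -> bool:
    """True if the latest cycle beats the first by at least `min_change`."""
    change = relative_change(measurements[0], measurements[-1])
    return change <= -min_change if lower_is_better else change >= min_change


# Hypothetical editing time in minutes for five consecutive cycles.
editing_minutes = [45, 41, 38, 30, 24]

print(f"{relative_change(editing_minutes[0], editing_minutes[-1]):.0%}")  # -47%
print(is_improving(editing_minutes))  # True
```

If the comparison comes back flat, the feedback path is the first thing to inspect: measurements are being taken but nothing is updating the instructions.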

If you want to go deeper on the systems thinking that makes flywheels work, check out Systems Thinking for AI Power Users for the broader framework.

The Compounding Payoff

Linear workflows scale linearly. You put in X effort, you get X output. Flywheels compound. The effort you put into cycle 1 pays dividends in every subsequent cycle, because each improvement stays in the system. After enough cycles, the system produces more than you could by running the workflow manually, even if you ran it all day.

That’s not efficiency. That’s leverage.

Pick one workflow you run at least weekly. Add one feedback loop. Measure one thing. Run five cycles and compare cycle 1 to cycle 5. That’s your proof of concept. The flywheel earns its own expansion from there.
