How We Set Up AI Agents to Review Each Other's Code

You don’t let a developer review their own pull request. The same principle applies to AI agents.

When a single agent writes code and then checks its own work, it carries forward every assumption, shortcut, and blind spot from the implementation. It knows what it meant to do, so it sees what it meant to do. That’s confirmation bias, and it’s just as real in AI context windows as it is in human brains.

We run a two-agent pattern where one agent implements and a completely separate agent reviews in a fresh context. Here’s the setup, what it catches, and when the overhead is worth it.

Why Self-Review Fails

When Claude Code writes a function and then you ask it to review that function in the same conversation, it has full context on every decision it made. Why it chose that data structure. Why it handled that edge case the way it did. Why it skipped error handling on that one path.

That context is the problem. The agent “remembers” its reasoning, so it evaluates the code against its intentions rather than against the actual behavior. A missing null check looks fine because the agent knows the input will never be null in the current use case. A hardcoded value looks fine because the agent remembers why it picked that number.

A fresh agent sees none of that. It sees code. It evaluates what the code does, not what someone meant it to do. That gap between intention and implementation is where bugs live.

The Fresh Eyes Principle

The Two-Agent Architecture

Our setup uses Claude Code (CC) as the implementer and a separate agent (dispatched via Codex or OpenClaw) as the reviewer. The key constraint: the reviewer never sees the implementer’s conversation, reasoning, or instructions. It gets the code and a brief description of what the code is supposed to do.

Implementer (CC):

- Full tool access: file read/write, git, terminal, build tools

- Works from a spec or task description

- Writes the code, runs tests, commits to a branch

- Produces a handoff summary: what changed, what files, what the expected behavior is

Reviewer (separate agent):

- Read-only access: can read files and run tests, cannot modify code or push

- Gets ONLY: the diff (or changed files), a one-line description of the intended behavior, the test command, and any hard architectural constraints

- Does NOT get: the spec, the implementer’s conversation, the reasoning behind decisions

- Produces: a review with findings categorized by severity

Why Read-Only Matters

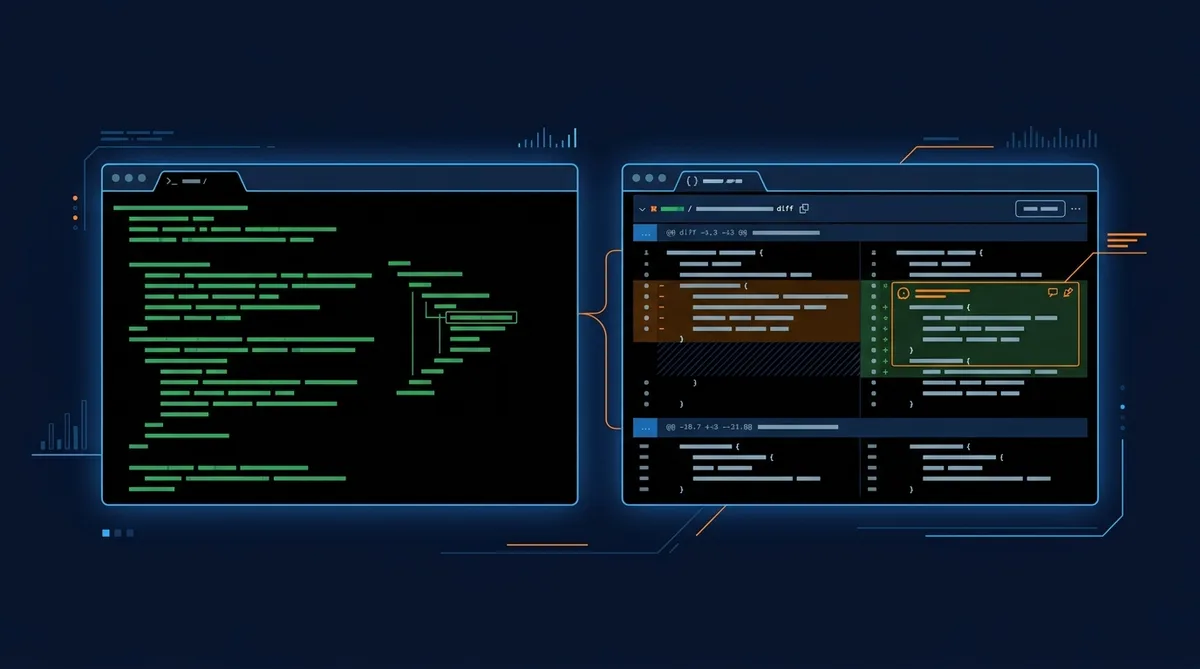

Setting Up the Handoff

The handoff between agents is the critical design point. Too much context and the reviewer inherits the implementer’s biases. Too little and it can’t distinguish intentional patterns from mistakes.

What to include in the handoff:

- The diff or list of changed files

- A one-line description of the feature or fix (“Add retry logic to the deploy script”)

- The test command to run (“npm test” or “pytest tests/”)

- Any relevant architectural constraints (“We use SQLite, not Postgres”)

What to deliberately exclude:

- The full spec or requirements doc

- The implementer’s conversation or reasoning

- Explanations of WHY certain approaches were chosen

- Comments like “I wasn’t sure about this part” (biases the review)
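One way to make these two lists hard to violate is to give the handoff a fixed shape, so there is simply no field where reasoning or spec text could go. Here is a minimal Python sketch of that idea. The `Handoff` class, its field names, and `render` are hypothetical names chosen for illustration, not part of Claude Code or any agent tooling.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Handoff:
    """Everything the reviewer is allowed to see, and nothing else."""
    diff: str                           # the diff or changed file contents
    description: str                    # one line, e.g. "Add retry logic to the deploy script"
    test_command: str                   # e.g. "npm test" or "pytest tests/"
    constraints: tuple[str, ...] = ()   # e.g. ("We use SQLite, not Postgres",)

    def __post_init__(self) -> None:
        # Enforce the one-line rule so rationale can't sneak in as a long description.
        if "\n" in self.description.strip():
            raise ValueError("description must be a single line")

    def render(self) -> str:
        """Format the handoff as the text passed to the reviewer."""
        parts = [
            f"Description of changes: {self.description.strip()}",
            f"Test command: {self.test_command}",
        ]
        if self.constraints:
            parts.append("Constraints:")
            parts.extend(f"- {c}" for c in self.constraints)
        parts.append("Changed files:")
        parts.append(self.diff)
        return "\n".join(parts)
```

The single-line check is a crude guard, but it stops the most common leak: an implementer summary that grows into a justification.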

Code Review Agent Prompt

You are a code reviewer. Review the following changes for:

1. **Correctness**: Does the code do what the description says? Are there logic errors?
2. **Error handling**: Are failure cases handled? Missing null checks, uncaught exceptions, missing validation?
3. **Hardcoded values**: Are there magic numbers, hardcoded paths, or environment-specific values that should be configurable?
4. **Style consistency**: Does the code match the patterns in the surrounding codebase?
5. **Security**: Any obvious vulnerabilities? SQL injection, unvalidated input, exposed secrets?

Description of changes: [one-line description]

Changed files: [paste diff or file contents]

Report findings as:

- CRITICAL: Must fix before merge
- WARNING: Should fix, but not a blocker
- NOTE: Style or minor improvement suggestion

If the code looks clean, say so. Don't invent problems.
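Because the prompt asks for fixed severity prefixes, the reviewer's output can be triaged mechanically. The sketch below assumes each finding sits on its own line and starts with one of the three labels followed by a colon, optionally behind a list marker. Real agent output may not be that tidy, so treat the pattern as a starting point. `parse_findings` and `blocks_merge` are hypothetical helper names.

```python
import re

SEVERITIES = ("CRITICAL", "WARNING", "NOTE")
# Matches lines such as "- CRITICAL: missing null check in load()".
FINDING_RE = re.compile(r"^\s*(?:[-*]\s*)?(CRITICAL|WARNING|NOTE)\s*:\s*(.+)$")


def parse_findings(review_text: str) -> dict[str, list[str]]:
    """Group reviewer findings by severity label; unlabeled lines are ignored."""
    grouped: dict[str, list[str]] = {s: [] for s in SEVERITIES}
    for line in review_text.splitlines():
        match = FINDING_RE.match(line)
        if match:
            grouped[match.group(1)].append(match.group(2).strip())
    return grouped


def blocks_merge(grouped: dict[str, list[str]]) -> bool:
    """Per the prompt, only CRITICAL findings must be fixed before merge."""
    return bool(grouped["CRITICAL"])
```

A review that says only "the code looks clean" parses to three empty lists, so `blocks_merge` returns `False`.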

What the Reviewer Actually Catches

After running this pattern across dozens of code changes over a few months, we keep seeing the same categories of issue that single-agent review misses. Roughly 60% of reviewer findings are legitimate issues the implementer would have shipped without the second look.

Hardcoded Values

The implementer knows the server runs on port 9009 and the deploy path is /var/www/. So it hardcodes them. The reviewer flags every hardcoded value because it doesn’t have the context that makes them feel “obvious.”

This is the most common catch. Roughly a third of review findings are values that should be in config files or environment variables.
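The usual fix is small. A minimal Python sketch, assuming the settings move to environment variables: the names `APP_PORT` and `DEPLOY_PATH` and the `DeployConfig` class are made up for this example, and the old hardcoded values survive only as defaults.

```python
import os
from dataclasses import dataclass


@dataclass(frozen=True)
class DeployConfig:
    port: int
    deploy_path: str


def load_config(env=None) -> DeployConfig:
    """Read settings from the environment, falling back to the old hardcoded values."""
    # Accepting a mapping makes the function testable without touching os.environ.
    env = os.environ if env is None else env
    return DeployConfig(
        port=int(env.get("APP_PORT", "9009")),
        deploy_path=env.get("DEPLOY_PATH", "/var/www/"),
    )
```

With this in place the values are still visible in one spot, but a reviewer (or a second environment) can see they are defaults rather than assumptions.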

Missing Error Handling on “Happy Path” Code

When the implementer builds a feature, it’s mentally running the success case. The API returns data, the file exists, the network is up. The reviewer, approaching cold, immediately asks “what if the API returns an error?” and “what if this file doesn’t exist?”

We’ve caught missing error handlers on file operations, API calls, and database queries that the implementer assumed would always succeed because they always succeeded during development.
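To make the pattern concrete, here is an illustrative before and after for a file read, written in Python. This is not code from one of our reviews. The function names and the choice to raise `RuntimeError` are assumptions made for the example.

```python
import json
from pathlib import Path


def load_settings_happy_path(path: str) -> dict:
    # Assumes the file exists and contains valid JSON.
    return json.loads(Path(path).read_text())


def load_settings(path: str) -> dict:
    """Same read, with the failure cases a cold reviewer asks about made explicit."""
    try:
        text = Path(path).read_text(encoding="utf-8")
    except FileNotFoundError:
        raise RuntimeError(f"settings file not found: {path}") from None
    except OSError as exc:
        # Permission errors, path is a directory, and similar I/O failures.
        raise RuntimeError(f"could not read {path}: {exc}") from exc
    try:
        data = json.loads(text)
    except json.JSONDecodeError as exc:
        raise RuntimeError(f"{path} is not valid JSON: {exc}") from exc
    if not isinstance(data, dict):
        raise RuntimeError(f"{path} must contain a JSON object")
    return data
```

Both versions behave identically during development, which is exactly why the first one survives self-review.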

Style Drift from the Codebase

After a long implementation session, CC’s coding style can drift from the existing codebase patterns. Maybe it starts using arrow functions where the codebase uses regular functions, or switches from async/await to .then() chains. The reviewer catches these because it’s comparing the new code against the existing files, not against its own preferences.

Logic Bugs in Edge Cases

The implementer tested the feature with typical inputs. The reviewer, reading the code without knowing which inputs were tested, spots branches that handle unusual inputs incorrectly. Off-by-one errors, boundary conditions, and type coercion bugs live here. One example: our implementer wrote a file path concatenation that worked perfectly on Linux but would silently break on Windows due to backslash handling. The reviewer flagged it because it evaluated the code at face value instead of assuming the dev environment was the only environment.
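The path bug is easy to reproduce in miniature. The Python sketch below is an illustration of the general problem, not the code from that review. It uses `pathlib`'s pure path classes so the Windows behavior can be observed on any operating system. The example paths are made up.

```python
from pathlib import PurePosixPath, PureWindowsPath


def join_naive(base: str, name: str) -> str:
    # String concatenation bakes in "/" as the separator.
    return base + "/" + name


# On Linux-style paths the naive join and pathlib agree.
print(join_naive("/var/www", "app.tar.gz"))            # /var/www/app.tar.gz
print(PurePosixPath("/var/www") / "app.tar.gz")        # /var/www/app.tar.gz

# With a Windows base path, the naive join produces mixed separators,
# while pathlib uses the separator that belongs to the path flavour.
windows_base = r"C:\deploy\releases"
print(join_naive(windows_base, "app.tar.gz"))          # C:\deploy\releases/app.tar.gz
print(PureWindowsPath(windows_base) / "app.tar.gz")    # C:\deploy\releases\app.tar.gz
```

In real code, `pathlib.Path` picks the right flavour for the host automatically, so the join never needs to know which separator is in play.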

What It Doesn't Catch

When to Use This (And When Not To)

Two-agent review adds overhead: another agent invocation, typically 2-5 minutes per review, and another set of findings to triage. For a 30-minute implementation, that’s roughly a 7-17% time increase. It’s not worth it for everything.

Use it when:

- The code change touches infrastructure, deployment, or security-sensitive paths

- The change is complex enough that you wouldn’t rubber-stamp a human PR for it

- You’re building something that will run unattended (agents, cron jobs, automation)

- The implementer worked in a long session where context accumulation is likely

Skip it when:

- The change is a one-line fix with an obvious diff

- You’re iterating rapidly on a prototype that will be rewritten

- The code is already covered by comprehensive tests that passed

- You’re making cosmetic or documentation-only changes

Scaling: Adding Specialized Reviewers

Once the two-agent pattern is working, you can add specialized reviewers for specific concerns:

- Security reviewer: Focused exclusively on input validation, authentication, data exposure, and dependency vulnerabilities. Uses a tighter prompt that ignores style and focuses on attack surfaces.

- Performance reviewer: Focused on query efficiency, unnecessary loops, memory allocation patterns, and caching opportunities.

- Documentation reviewer: Checks whether function signatures, API endpoints, and config changes are reflected in docs.

Each specialized reviewer runs independently with its own prompt and its own restricted focus. The implementer gets back a consolidated set of findings across all reviewers.

Dispatch Multiple Reviewers

I have a code change ready for review. Dispatch three independent reviews:

1. **General review**: Correctness, error handling, style consistency
2. **Security review**: Input validation, authentication, data exposure, dependency risks
3. **Performance review**: Query efficiency, unnecessary computation, caching opportunities

Each reviewer should operate independently and not see the other reviewers' findings. Consolidate all findings into a single report sorted by severity.

Changed files: [list files]

Description: [one-line description]
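If you orchestrate the reviewers from a script rather than a prompt, the same independence and consolidation rules can be sketched in a few lines of Python. Everything here is an assumption for illustration: each reviewer is modeled as a plain callable that takes the handoff text and returns `(severity, message)` pairs, standing in for whatever actually invokes an agent.

```python
from concurrent.futures import ThreadPoolExecutor
from typing import Callable

SEVERITY_ORDER = {"CRITICAL": 0, "WARNING": 1, "NOTE": 2}

Finding = tuple[str, str, str]  # (severity, reviewer name, message)
Reviewer = Callable[[str], list[tuple[str, str]]]  # handoff -> [(severity, message)]


def dispatch_reviews(handoff: str, reviewers: dict[str, Reviewer]) -> list[Finding]:
    """Run each reviewer on the same handoff in isolation, then merge by severity."""
    with ThreadPoolExecutor(max_workers=len(reviewers) or 1) as pool:
        # Each reviewer receives only the handoff, never another reviewer's output.
        futures = {name: pool.submit(fn, handoff) for name, fn in reviewers.items()}
        results = {name: fut.result() for name, fut in futures.items()}
    merged = [(sev, name, msg) for name, found in results.items() for sev, msg in found]
    # sorted() is stable, so findings keep reviewer order within a severity level.
    return sorted(merged, key=lambda f: SEVERITY_ORDER.get(f[0], len(SEVERITY_ORDER)))
```

Keeping the reviewer name on each finding makes it easy to see which specialist raised what once the lists are merged.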

The pattern extends naturally to any domain where you want independent evaluation: content review (voice match, factual accuracy, SEO), infrastructure review (cost, reliability, scalability), or even design review (accessibility, consistency, responsiveness).

For more on how commands, agents, and skills fit together in these workflows, see Commands vs Agents vs Skills in Claude Code.

The Core Insight

Fresh context is a feature, not a limitation. When you deliberately give a reviewer less information, you get more honest feedback. The implementer’s reasoning is valuable for building. The reviewer’s ignorance is valuable for catching.

Set up the separation. Keep the handoff lean. Let each agent do what it’s best at.
